
Revision 24 as of 2026-02-02 17:05:38


Vector Operations

Vector operations can be expressed numerically or geometrically.


Addition

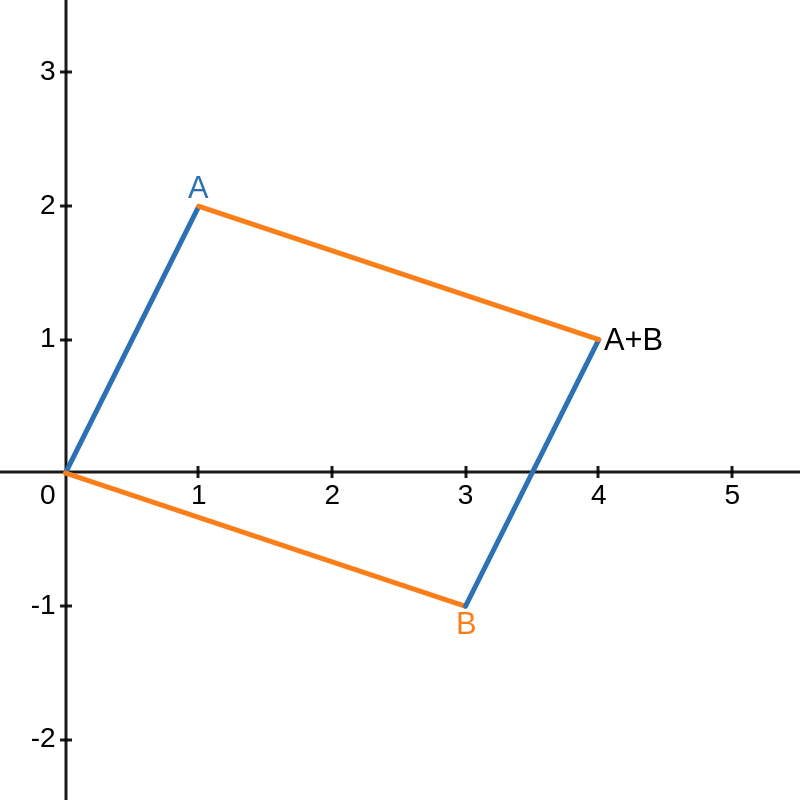

Vectors are added numerically as piecewise summation. Given vectors a⃗ and b⃗ equal to [1,2] and [3,1], their sum is [4,3].

Vectors are added geometrically by joining them tip-to-tail, as demonstrated in the below graphic.

Properties

Both views of vector addition show that addition is commutative: a⃗ + b⃗ = b⃗ + a⃗.

Furthermore, it follows that if a⃗ + b⃗ = c⃗, then c⃗ - b⃗ = a⃗.
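A minimal Julia sketch of the worked example and both properties; Julia's `+` on equal-length arrays is already component-wise, so no library is needed.

```julia
# Vector addition is component-wise: `+` sums each pair of matching entries.
a = [1, 2]
b = [3, 1]
c = a + b

@assert c == [4, 3]      # matches the worked example
@assert a + b == b + a   # commutativity
@assert c - b == a       # if a + b = c, then c - b = a
```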

Scalar Multiplication

Multiplying a vector by a scalar is equivalent to multiplying each component of the vector by the scalar.

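Geometrically, scalar multiplication scales the vector: multiplying by k changes its length by a factor of |k|, and a negative k also reverses its direction.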
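A short Julia sketch of scalar multiplication; the vector and scalar are arbitrary illustrative values, and `norm` and `dot` come from Julia's standard `LinearAlgebra` library.

```julia
using LinearAlgebra  # for norm and dot

v = [3.0, 4.0]   # a vector of length 5
k = -2.0
w = k * v        # each component is multiplied by k

@assert w == [-6.0, -8.0]
@assert norm(w) ≈ abs(k) * norm(v)   # length is scaled by |k|
@assert dot(w, v) < 0                # a negative k points w opposite to v
```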

Dot Product

Vectors of equal dimensions can be multiplied as a dot product. In calculus this is commonly notated as a⃗ ⋅ b⃗, while in linear algebra it is usually written as aᵀb.

In R³, the dot product can be calculated numerically as a⃗ ⋅ b⃗ = a₁b₁ + a₂b₂ + a₃b₃. More generally, for vectors with n components this is Σaᵢbᵢ, summed over i = 1, …, n.

```julia
julia> using LinearAlgebra

julia> # type '\cdot' and press Tab to complete it into '⋅'

julia> [2,3,4] ⋅ [5,6,7]
56
```
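The sum Σaᵢbᵢ can also be written out explicitly. The sketch below uses a hypothetical helper, `sum_of_products`, and checks it against `dot` from the standard `LinearAlgebra` library and against the transpose form aᵀb, written `a' * b` in Julia (for real vectors the adjoint `'` is the transpose).

```julia
using LinearAlgebra

# Hypothetical helper: the dot product as an explicit sum of component-wise products.
function sum_of_products(a::AbstractVector, b::AbstractVector)
    length(a) == length(b) || throw(DimensionMismatch("vectors must have equal dimensions"))
    s = zero(promote_type(eltype(a), eltype(b)))
    for i in eachindex(a, b)
        s += a[i] * b[i]
    end
    return s
end

a = [2, 3, 4]
b = [5, 6, 7]
@assert sum_of_products(a, b) == dot(a, b) == 56
@assert a' * b == 56   # the linear algebra form aᵀb gives the same scalar
```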

Geometrically, the dot product is ||a⃗|| ||b⃗|| cos(θ), where θ is the angle between the two vectors. This shows that the dot product reflects both the lengths (magnitudes) of the vectors and how closely their directions align.
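Rearranging the geometric definition gives cos(θ) = (a⃗ ⋅ b⃗) / (||a⃗|| ||b⃗||), which recovers the angle between two vectors. A sketch with a hypothetical helper `angle_between`; the `clamp` call is a defensive choice against floating-point rounding pushing the ratio slightly outside [-1, 1].

```julia
using LinearAlgebra

# Hypothetical helper: the angle θ (in radians) from a ⋅ b = ‖a‖ ‖b‖ cos(θ).
angle_between(a, b) = acos(clamp(dot(a, b) / (norm(a) * norm(b)), -1.0, 1.0))

a = [1.0, 0.0]
b = [1.0, 1.0]
θ = angle_between(a, b)

@assert θ ≈ π / 4                                # 45 degrees
@assert norm(a) * norm(b) * cos(θ) ≈ dot(a, b)   # geometric form matches the numeric one
```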

The operation is also known as a scalar product because it yields a single scalar.

Lastly, in terms of linear algebra, a ⋅ b is equivalent to multiplying the length of a by the scalar projection of b onto the column space of a. A nonzero vector, viewed as an n × 1 matrix, has rank 1, so this column space is a one-dimensional subspace of Rⁿ: a line through the origin. As a result of this interpretation, this operation is also known as a projection product.
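The projection reading can be checked numerically. This sketch assumes the standard formula (a ⋅ b) / ||a|| for the scalar projection of b onto a, and builds the rank-1 projection matrix P = aaᵀ / (aᵀa) onto the line spanned by a; the vectors are illustrative.

```julia
using LinearAlgebra

a = [3.0, 0.0]
b = [2.0, 5.0]

scalar_proj = dot(a, b) / norm(a)     # signed length of b's shadow on the line of a
@assert norm(a) * scalar_proj ≈ dot(a, b)

P = (a * a') / (a' * a)               # projects onto the column space of a
@assert rank(P) == 1
@assert P * b ≈ [2.0, 0.0]            # the projected vector lies on that line
@assert norm(P * b) ≈ abs(scalar_proj)
```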

The dot product can be used to extract components of a vector. For example, to extract the x component of a vector a⃗ in R³, take its dot product with the unit vector î: a⃗ ⋅ î = a₁.
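A brief sketch of component extraction in R³; `i_hat`, `j_hat`, and `k_hat` are illustrative names for î, ĵ, and k̂.

```julia
using LinearAlgebra

i_hat = [1, 0, 0]
j_hat = [0, 1, 0]
k_hat = [0, 0, 1]

a = [7, -2, 5]
@assert dot(a, i_hat) == 7    # x component
@assert dot(a, j_hat) == -2   # y component
@assert dot(a, k_hat) == 5    # z component
```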

Properties

Dot product multiplication is commutative.

a⃗ ⋅ b⃗ = b⃗ ⋅ a⃗

aᵀb = bᵀa

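The cos(θ) factor in the geometric definition gives several useful properties for nonzero vectors a and b.

The dot product is 0 exactly when a and b are orthogonal (θ = 90°).

The dot product is positive exactly when 0° ≤ θ < 90° (θ is acute, or the vectors point the same way).

The dot product is negative exactly when 90° < θ ≤ 180° (θ is obtuse, or the vectors point opposite ways).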

The linear algebra view corroborates this: when a and b are orthogonal, the projection of b onto the line spanned by a is the zero vector, so the dot product must be 0.
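The commutativity and sign properties can be spot-checked numerically. The sketch uses illustrative vectors in R² at 45°, 90°, and 135° from a.

```julia
using LinearAlgebra

a             = [1.0, 0.0]
acute         = [1.0, 1.0]    # 45° from a
perpendicular = [0.0, 2.0]    # 90° from a
obtuse        = [-1.0, 1.0]   # 135° from a

@assert dot(a, acute) == dot(acute, a)   # commutative
@assert a' * acute == acute' * a         # aᵀb = bᵀa

@assert dot(a, acute) > 0
@assert dot(a, perpendicular) == 0
@assert dot(a, obtuse) < 0
```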

Cross Product

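Two vectors in R³ can be multiplied as a cross product. The notation is a⃗ × b⃗, and it is calculated as the determinant of a 3 × 3 matrix formed from the two vectors together with the row [î ĵ k̂] of unit vectors:

$$
\vec{a} \times \vec{b} =
\begin{vmatrix}
\hat{\imath} & \hat{\jmath} & \hat{k} \\
a_1 & a_2 & a_3 \\
b_1 & b_2 & b_3
\end{vmatrix}
= (a_2 b_3 - a_3 b_2)\,\hat{\imath} - (a_1 b_3 - a_3 b_1)\,\hat{\jmath} + (a_1 b_2 - a_2 b_1)\,\hat{k}
$$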

Recall that the determinant of a matrix does not change under transposition, so this 3 × 3 matrix can be built from either rows or columns.
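A sketch of the cofactor expansion along the [î ĵ k̂] row, using a hypothetical helper `cross_by_cofactors` and comparing it with `cross` from Julia's standard `LinearAlgebra` library.

```julia
using LinearAlgebra

# Hypothetical helper: expand the determinant along the row [î ĵ k̂].
function cross_by_cofactors(a::AbstractVector, b::AbstractVector)
    (length(a) == 3 && length(b) == 3) ||
        throw(DimensionMismatch("the cross product is defined here for vectors in R³"))
    x = a[2] * b[3] - a[3] * b[2]       # î coefficient
    y = -(a[1] * b[3] - a[3] * b[1])    # ĵ coefficient (minus sign from the cofactor)
    z = a[1] * b[2] - a[2] * b[1]       # k̂ coefficient
    return [x, y, z]
end

a = [1, 2, 3]
b = [4, 5, 6]
@assert cross_by_cofactors(a, b) == cross(a, b) == [-3, 6, -3]
```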

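The cross product returns a vector that is orthogonal to both a⃗ and b⃗. Its magnitude reflects how far the vectors are from parallel: it is 0 when they are parallel and, for fixed lengths, largest when they are perpendicular.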

Geometrically, the cross product is ||a⃗|| ||b⃗|| sin(θ) n̂, where θ is the angle between the two vectors (0° ≤ θ ≤ 180°) and n̂ is the unit vector normal to both, with its direction given by the right-hand rule.
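A numerical check of the geometric description using `LinearAlgebra.cross`; the vectors are illustrative.

```julia
using LinearAlgebra

a = [1.0, 2.0, 3.0]
b = [4.0, 5.0, 6.0]
c = cross(a, b)

# The result is orthogonal to both inputs.
@assert abs(dot(c, a)) < 1e-12 && abs(dot(c, b)) < 1e-12

# Its length is ‖a‖ ‖b‖ sin(θ), with θ recovered from the dot product.
θ = acos(dot(a, b) / (norm(a) * norm(b)))
@assert norm(c) ≈ norm(a) * norm(b) * sin(θ)
```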

Properties

Cross product multiplication is anti-commutative: a⃗ × b⃗ = -b⃗ × a⃗.
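A quick check of anti-commutativity with illustrative vectors; one consequence is that a vector crossed with itself is the zero vector, since a⃗ × a⃗ = -(a⃗ × a⃗).

```julia
using LinearAlgebra

a = [1, 2, 3]
b = [4, 5, 6]
@assert cross(a, b) == -cross(b, a)   # anti-commutative
@assert cross(a, a) == [0, 0, 0]      # hence a × a = 0
```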

Outer Product

Vectors of any sizes can be multiplied as an outer product. In calculus this is commonly notated as a⃗ ⊗ b⃗, while in linear algebra it is usually written as abᵀ. If a is an m × 1 column vector and b is an n × 1 column vector (so bᵀ is a 1 × n row), then the outer product is an m × n matrix whose (i, j) entry is aᵢbⱼ.
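In Julia, abᵀ is written `a * b'` for real column vectors (`'` is the adjoint, which equals the transpose for real entries). A sketch with illustrative sizes m = 3 and n = 2:

```julia
a = [1, 2, 3]   # m × 1 column, m = 3
b = [4, 5]      # n × 1 column, n = 2

M = a * b'      # abᵀ: an m × n matrix with M[i, j] == a[i] * b[j]

@assert size(M) == (3, 2)
@assert M == [4 5; 8 10; 12 15]
```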

Properties

a⃗ ⊗ b⃗ = (b⃗ ⊗ a⃗)ᵀ

(a⃗ + b⃗) ⊗ c⃗ = (a⃗ ⊗ c⃗) + (b⃗ ⊗ c⃗)

c⃗ ⊗ (a⃗ + b⃗) = (c⃗ ⊗ a⃗) + (c⃗ ⊗ b⃗)

d(a⃗ ⊗ b⃗) = (da⃗) ⊗ b⃗ = a⃗ ⊗ (db⃗)

(a⃗ ⊗ b⃗) ⊗ c⃗ = a⃗ ⊗ (b⃗ ⊗ c⃗)
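The first four properties can be spot-checked with matrices, using a hypothetical helper `outer(x, y) = x * y'` to stand in for ⊗. Associativity is not checked here, because (a⃗ ⊗ b⃗) ⊗ c⃗ is a third-order tensor rather than a matrix and does not fit this matrix-only sketch.

```julia
outer(x, y) = x * y'   # hypothetical helper: x ⊗ y as the matrix xyᵀ

a = [1, 2, 3]
b = [4, 5, 6]
c = [7, 8, 9]
d = 2

@assert outer(a, b) == transpose(outer(b, a))
@assert outer(a + b, c) == outer(a, c) + outer(b, c)
@assert outer(c, a + b) == outer(c, a) + outer(c, b)
@assert d * outer(a, b) == outer(d * a, b) == outer(a, d * b)
```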

Because every column of an outer product is a scalar multiple of a⃗ (column j is bⱼa⃗), all of its columns lie on the same line. Therefore the outer product of two nonzero vectors always has rank 1 (and rank 0 if either vector is zero).
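A sketch confirming the column structure and the rank with `rank` from Julia's standard `LinearAlgebra` library; the vectors are illustrative.

```julia
using LinearAlgebra

a = [1.0, 2.0, 3.0]
b = [4.0, 5.0]
M = a * b'

# Column j of the outer product is b[j] times a.
@assert all(M[:, j] ≈ b[j] * a for j in eachindex(b))
@assert rank(M) == 1
@assert rank(zeros(3) * b') == 0   # a zero factor gives rank 0
```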